Think Of A Word. You Have Just Produced A Future Voice First Command

Today at Facebook’s F8 conference, Regina Dugan, a former DARPA executive and current head of Facebook’s research and development lab, Building 8, and her team of 60 scientists released information on a non-invasive way to build brain-computer interfaces meant to let you “communicate using only your mind.”

This all sounds very far off, perhaps dystopian and scary, and in some ways it is. But it is not as far off as many would think, perhaps less than 10 years away, and it may not be all that scary. The fundamental premise is simple: your words are the best computer interface. Facebook now concedes this, and it will look for ways to make both spoken words and the “Silent Words” you say in your mind the ultimate computer interface.

This isn’t about decoding random thoughts. This is about decoding the words you have already decided to share by sending them to the speech center of your brain. Think of it like this: as you send words to your speech center, you have already edited out the thought process and are sending only the product of your thoughts.

~—~

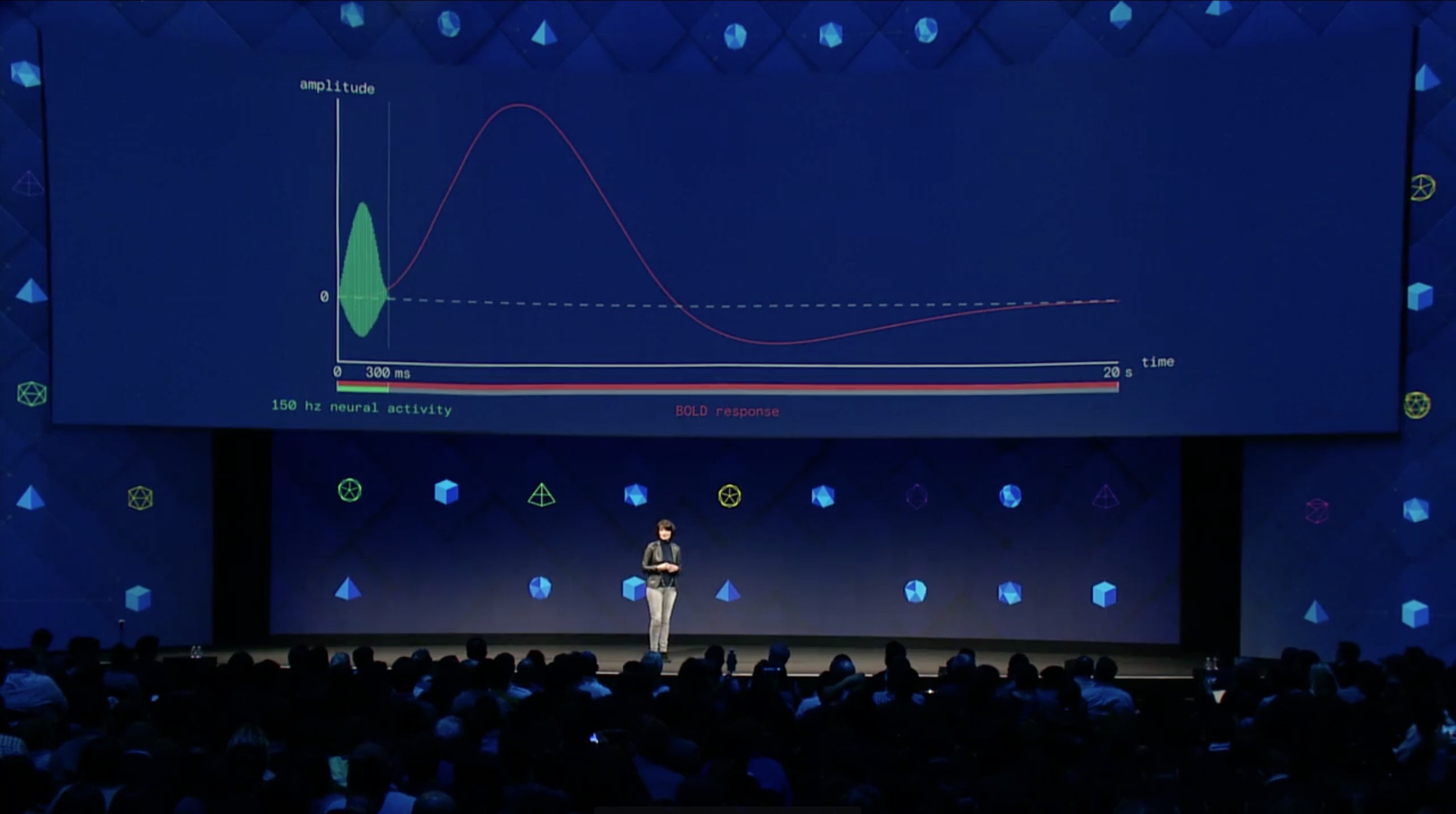

I have privately spoken of what I call Silent Voice First interfaces for over 10 years. Most recently, I led a symposium at a large VC firm on Sand Hill Road, where I spent some time on this topic. The concept is simple: we all say the words in our minds before we speak them aloud. In some situations, perhaps not as many as we imagine, we may need to simply think the words we want to say to our Voice First Personal Assistants rather than speak them aloud. Facebook has identified this need and is building its new systems on the established science of hemispheric brain-energy scans that locate the speech centers, then using AI and Machine Learning (ML) to extract word intents from this data.

Regina Dugan’s talk was riveting to anyone unfamiliar with Voice First research. Here are some of the highlights of the event:

- Facebook is working to develop a brain-computer interface that will, in the future, allow individuals to communicate with other people without speaking. Ultimately, they hope to develop a technology that allows individuals to “speak” using nothing but their thoughts—unconstrained by time or distance.

- They want to create category-defining products that are Social First, using, of course, Voice First as the primary input system: products that allow us to form more human connections and, in the end, unite the digital world of the internet with the physical world and the human mind.

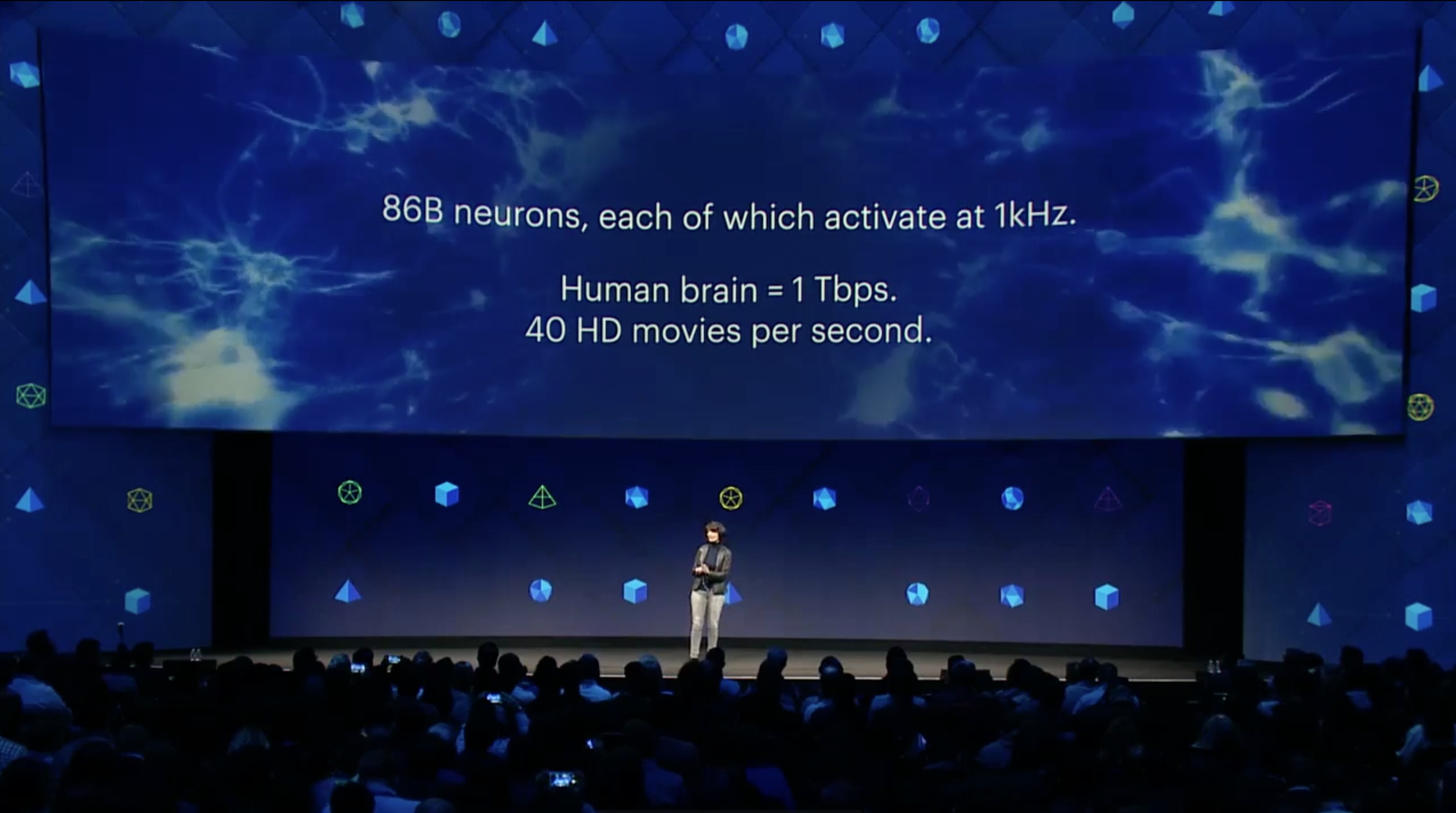

- The brain produces about 1 terabyte per second, yet through speech we can transmit information to others at only about 100 bytes per second. Facebook wants to get all of that information out of the “brain” and into the world. Typing is not going to be useful in this new world. The average person types between 38 and 40 words per minute. We can speak at 100 words per minute, and the goal is to match this speed using only our thoughts. That’s far, far faster than most humans can type on a computer or device.

- The goal is to let people type straight from their brains 5 times faster than they can type on a smartphone. This means developing technologies that can “read” the human brain in order to transmit this information.

- Next, they will work to allow people to “type” with their brains.

- Building 8 is working on technology that will allow people to type and click with their brains in order to interact with computers, a sort of brain touch-screen metaphor. This tech is an order of magnitude more complex than decoding Silent Words.

- They have developed actuators that allow people to “hear” through their skin. Ultimately, the technology will allow humans to “feel” words. Eventually you will think something and send the thought to someone’s skin. This will also allow people to think something in one language and have another person receive the thought in an entirely different language.
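Taking the figures quoted in these highlights at face value (and assuming 1 TB means $10^{12}$ bytes), the two gaps work out as follows:

$$
\frac{1\ \text{TB/s}}{100\ \text{B/s}} = \frac{10^{12}\ \text{B/s}}{10^{2}\ \text{B/s}} = 10^{10}
\qquad\text{and}\qquad
\frac{100\ \text{words/min (speaking)}}{40\ \text{words/min (typing)}} = 2.5
$$

In other words, speech carries roughly one ten-billionth of the brain’s quoted output, while speaking is only about two and a half times faster than typing at the average rate.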

~—~

University Research And Partnerships

“We just want to be able to get those signals right before you actually produce the sound so you don’t have to say it out loud anymore”—Mark Chevillet, Facebook Building 8

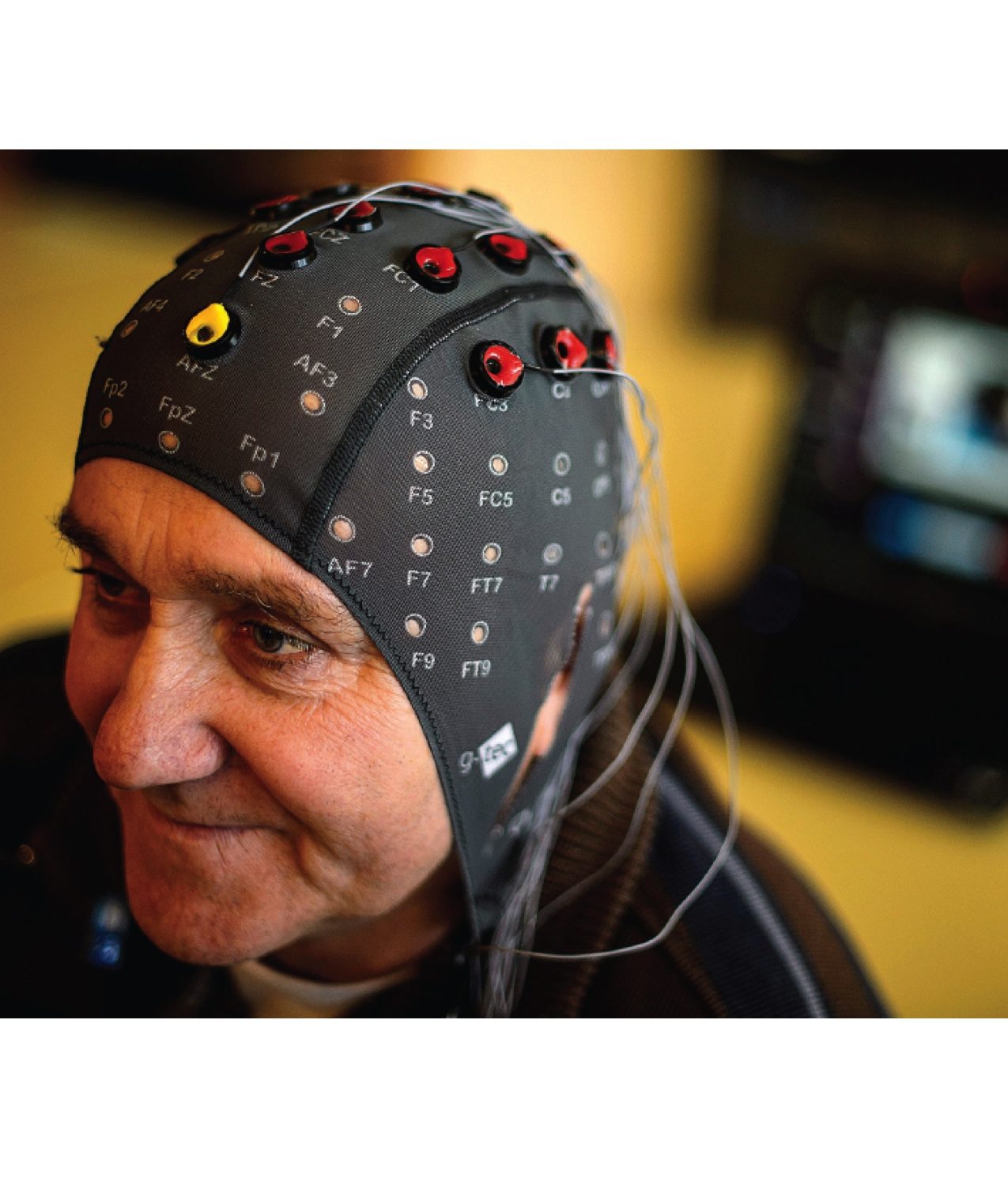

Facebook will collaborate with Johns Hopkins, the University of California, Berkeley, and the University of California, San Francisco on the project. The research will focus on finding a way to use light, such as LEDs or lasers, to sense neural signals emanating from the cerebral cortex. The approach is similar to how functional near-infrared spectroscopy is used to measure brain activity today. Here, however, it will be used to model the speech centers of the brain, converting the signals into patterns that AI and ML can decode into speech.

This headband lined with low-powered lasers would see brain activity and let you “think” a message rather than type it, or send a text in the middle of a conversation. Chevillet said there are already some good demonstrations of brain-computer interfaces, like a recent study in which three people with paralysis were able to use their minds to select letters with an on-screen cursor, one of them typing at eight words per minute. In that study, an implant inside the brain recorded the relevant neural signals. Others have experimented with interpreting the sounds people are making or thinking about.

Speaking Through Your Skin

The second project, which focuses on making it possible for people to recognize words with their skin, draws inspiration from Braille and Tadoma, a method of communication used by some people who are both deaf and blind in which they place a hand on another person’s face to feel the vibrations and air as that person speaks. Researchers built a device with 16 actuators on it and strapped it to an engineer’s arm. Another engineer had a tablet computer with nine different words on its display; as he tapped different words, like “grasp,” “black,” and “cone,” she felt vibrations on her arm that corresponded to the words and was able to correctly interpret that she needed to pick up a black cone on the table in front of her. The researchers take a spoken word, like “black,” separate it into its frequency components, and then deliver those frequencies to the actuators on her arm.
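The frequency-splitting step described above can be illustrated with a short sketch. This is not Building 8’s method, whose details were not published here; it assumes, hypothetically, that each of the 16 actuators is driven by the energy near one centre frequency, with centres log-spaced from 100 Hz to 4,000 Hz, and it measures each one with the Goertzel algorithm (a single-bin DFT). The names `actuator_levels` and `goertzel_power` are illustrative only.

```python
import math

NUM_ACTUATORS = 16  # the demo device described above had 16 actuators


def goertzel_power(samples, sample_rate, freq):
    """Return the power of `samples` at the DFT bin nearest `freq` (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * freq / sample_rate)  # index of the nearest DFT bin
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    power = s_prev2 ** 2 + s_prev ** 2 - coeff * s_prev * s_prev2
    return max(0.0, power)  # clamp tiny negative values caused by rounding error


def actuator_levels(samples, sample_rate, n_actuators=NUM_ACTUATORS,
                    f_low=100.0, f_high=4000.0):
    """Map one audio clip to a drive level in [0, 1] for each actuator.

    Hypothetical mapping: actuator i is driven by the power near a centre
    frequency, with centres log-spaced from f_low to f_high.
    """
    ratio = f_high / f_low
    centres = [f_low * ratio ** (i / (n_actuators - 1)) for i in range(n_actuators)]
    powers = [goertzel_power(samples, sample_rate, f) for f in centres]
    peak = max(powers)
    # Normalise so the strongest band vibrates at full intensity.
    return [p / peak if peak > 0 else 0.0 for p in powers]


if __name__ == "__main__":
    # A synthetic 1,170 Hz tone stands in for a recorded word (0.1 s at 8 kHz).
    rate = 8000
    tone = [math.sin(2 * math.pi * 1170 * t / rate) for t in range(800)]
    for i, level in enumerate(actuator_levels(tone, rate)):
        print(f"actuator {i:2d}: {'#' * round(level * 20)}")
```

Calling `actuator_levels(samples, 8000)` on a clip sampled at 8 kHz returns 16 values between 0 and 1, one per actuator. The sketch treats the whole clip as a single frame to stay short and dependency-free; a practical system would more likely use a proper filter bank over short, overlapping time windows so the vibration pattern changes as the word unfolds.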

Building 8 researchers think of this as a way to deliver language through the skin, and they expect that people will eventually be able to use this method to distinguish between about 100 words, or more. They may also use non-verbal signals like pressure and temperature. The idea is to eventually have a wearable that sends messages you can feel, without having to take your phone out and, say, interrupt an in-person conversation you’re having with someone.

The Voice First Revolution, Part Of It Will Be Silent

It is refreshing to see useful research of this caliber shared. I have kept silent in public about Silent Voice First for many reasons that, ironically, I could not speak of. Now I can address it more directly in public going forward. It is abundantly clear that the researchers at Building 8 are already achieving robust results. The idea that we will need to text with our thumbs to get things done is archaic and will be looked upon as quaint in perhaps 10 years or less.

I have called this the Voice First revolution [1], and today we can say that part of the Voice First revolution will be silent.

_____

[1] https://techpinions.com/there-is-a-revolution-ahead-and-it-has-a-voice/45071

~—~

IMPORTANT: Any reproduction, copying, or redistribution, in whole or in part, is prohibited without written permission from the publisher. Information contained herein is obtained from sources believed to be reliable, but its accuracy cannot be guaranteed. We are not financial advisors, nor do we give personalized financial advice. The opinions expressed herein are those of the publisher and are subject to change without notice. It may become outdated, and there is no obligation to update any such information. Recommendations should be made only after consulting with your advisor and only after reviewing the prospectus or financial statements of any company in question. You shouldn’t make any decision based solely on what you read here. Postings here are intended for informational purposes only. The information provided here is not intended to be a substitute for professional medical advice, diagnosis, or treatment. Always seek the advice of your physician or other qualified healthcare provider with any questions you may have regarding a medical condition. Information here does not endorse any specific tests, products, procedures, opinions, or other information that may be mentioned on this site. Reliance on any information provided, employees, others appearing on this site at the invitation of this site, or other visitors to this site is solely at your own risk.

Copyright Notice:

All content on this website, including text, images, graphics, and other media, is the property of Read Multiplex or its respective owners and is protected by international copyright laws. We make every effort to ensure that all content used on this website is either original or used with proper permission and attribution when available.

However, if you believe that any content on this website infringes upon your copyright, please contact us immediately using our 'Reach Out' link in the menu. We will promptly remove any infringing material upon verification of your claim. Please note that we are not responsible for any copyright infringement that may occur as a result of user-generated content or third-party links on this website. Thank you for respecting our intellectual property rights.