A Field Of View

One of the easiest misconceptions about Voice First is the assumption that there is just one basic modality: far-field devices. This is natural, since the first Voice First device was Amazon’s Echo. However, there are more. I have surfaced ~32 primary Voice First modalities. I will talk about two:

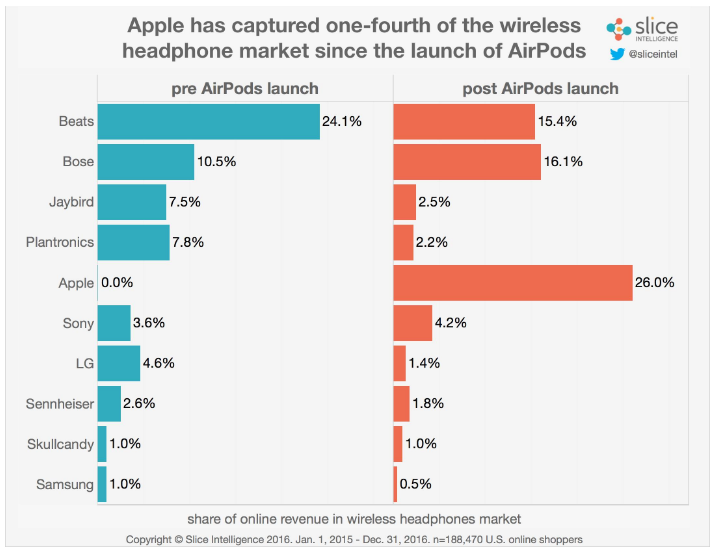

- Near-field: AirPods + Siri

- Far-field: Echo + Alexa

The distinction is not academic. Each modality has its own value, and in some cases apps and solutions can be useful in both.

The Far-Field

There is an array of attributes and interactions that are not appropriate for the far-field. Examples include reading personal messages or delivering sensitive information that you would not want spoken aloud, even in an empty room with just you present, for fear it could be overheard. Certainly not all information falls into this category, but a material amount does.

For the far-field, the interactions that make much better sense are general, less personal questions. Additionally, when a group is present there are interactions that make little sense for a near-field Voice First system.
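The reasoning above can be read as a simple routing rule. What follows is a minimal sketch in Python, not a description of how any shipping assistant behaves; the `Request` type, its fields, and `suitable_modalities` are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Request:
    """Hypothetical description of a single voice interaction."""
    text: str
    is_sensitive: bool   # e.g. personal messages, financial details
    group_present: bool  # more than one listener in the room


def suitable_modalities(request: Request) -> List[str]:
    """Return the modalities that fit a request.

    Assumed rule, taken from the text: sensitive content belongs
    in-ear, shared content belongs in the room, and everything
    else can work in either.
    """
    if request.is_sensitive:
        return ["near-field"]
    if request.group_present:
        return ["far-field"]
    return ["near-field", "far-field"]


if __name__ == "__main__":
    print(suitable_modalities(Request("read my messages", True, False)))
    print(suitable_modalities(Request("play some music", False, True)))
```

Sensitivity is checked first so that a private request is never spoken to a room, even when a group is present.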

The Amazon Echo, released in late 2014, has had a large head start in defining some of the elements of how a far-field system works. The fundamental elements:

- Is touch-less

- Is always on

- Is internet connected

The Echo and Alexa have defined some of the best and worst aspects of the VoiceOS’s UI and UX. Because it is an early Voice First system, it has made some mistakes in how a far-field system should react to some questions and requests. One example is friction-free Voice Commerce. In the current default, Alexa will dutifully take a request to conduct a transaction with Amazon without any barriers. Thus there is an apparent quagmire for friction-free Voice Commerce. However, in my research I have been able to create ways to solve this issue without ridiculous additional verifications or steps.

The far-field has a range of great benefits. To me it defines the physical space. Once you have become used to talking to the particular room where the device lives, you come to expect it, and the assumption that it is always there and ready creates a rich interactive environment of dialogs.

~-~

The Near-Field

The near-field Voice First environment is currently at an earlier stage than the far-field. Some may argue that Siri on an iOS device, Google Now, Cortana and a few others are near-field. However, I do not classify these systems on most smartphones as truly near-field. They are part of a mid-field mix. One of the fundamental defining points is that the delivery system is primarily in-ear. It is true you can lift the phone to the ear and get the response in-ear, but doing so involves mechanical and cognitive processes that slow down the interaction, much as pressing a button to talk to a far-field system does.

The basic characteristics of a near-field Voice First device are the same as those of far-field devices:

- Is touch-less

- Is always on

- Is internet connected

A true near-field system is always on, always connected, and can transmit responses to an in-ear device. Currently the best example of this is Siri and AirPods, but not fully, yet. AirPods still require a tap on the device to wake the system. There are a few reasons for this, but they are more philosophical than technical. Each AirPod has more computing power than an iPhone 1. With a mandate to make AirPods a true VoiceOS platform, it is entirely possible to have a battery-saving always-on “hey Siri” wake word. Of course, this will cost battery time, perhaps 20%-50%; however, in a near-field Voice First modality you may only use one AirPod at a time. When you add the time extension from 2 AirPods and the charging capacity of the battery case, you could have 12 hours of near-field Voice First with the current AirPod technology. The Cortex processor would have few issues listening for “hey Siri”.
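The battery estimate can be checked with rough arithmetic. The sketch below assumes a baseline of about 24 hours of total listening time for a pair of AirPods plus the charging case, which is Apple's advertised figure for the original AirPods and not a number from this article, and applies the 20%-50% wake word cost estimated above. The function name and its parameters are hypothetical.

```python
def always_on_hours(baseline_hours: float, wake_word_cost: float) -> float:
    """Estimate usable hours when always-on listening consumes a
    fraction (0.0 to just under 1.0) of the battery budget."""
    if not 0.0 <= wake_word_cost < 1.0:
        raise ValueError("wake_word_cost must be in the range [0, 1)")
    return baseline_hours * (1.0 - wake_word_cost)


# Assumption: advertised total for AirPods plus charging case.
BASELINE_HOURS = 24.0

if __name__ == "__main__":
    for cost in (0.2, 0.5):
        hours = always_on_hours(BASELINE_HOURS, cost)
        print(f"{cost:.0%} cost -> {hours:.1f} hours")
```

Under these assumptions the worst case, a 50% cost, works out to 12 hours, which lines up with the estimate above. The calculation treats the pair as used together; using one AirPod at a time would likely stretch the figure further.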

Thus Apple has clearly invented the leading near-field Voice First device; they just may be on a very conservative path to transition to a full Voice First system. The elements to make AirPods as useful as Alexa is in the far-field are already at hand. The use cases for near-field devices are clear: fundamentally, near-field is about more personal interactions and information. Examples are messages and financial interactions that you may not want to share in an open room.

The near-field will allow for a wide range of new and not yet thought of applications. These will span from the consumer level to the commercial and industrial level. Just in the commercial area I have surfaced over 1,200 direct use cases after studying this for over 30 years. The right technology has finally converged. One example of a commercial use case is for service workers to have instant access to the answer to any question simply by saying: “Let me ask our Voice AI system and check”. It is not hard to imagine service situations where this can be applied.

We are in the very early days of the Voice First revolution, yet the far-field is already becoming a mainstream technology. Even so, we have barely scratched the surface of the possibilities the far-field can offer. The near-field is even earlier in its life and may very well wind up moving just as fast once the fundamentals are established. It is my view that Apple will show what I am calling Siri 3 and a more complete VoiceOS by the WWDC (Worldwide Developers Conference) in June 2017. Along the way the other ~32 modalities will begin to surface. These are fast moving early days.

